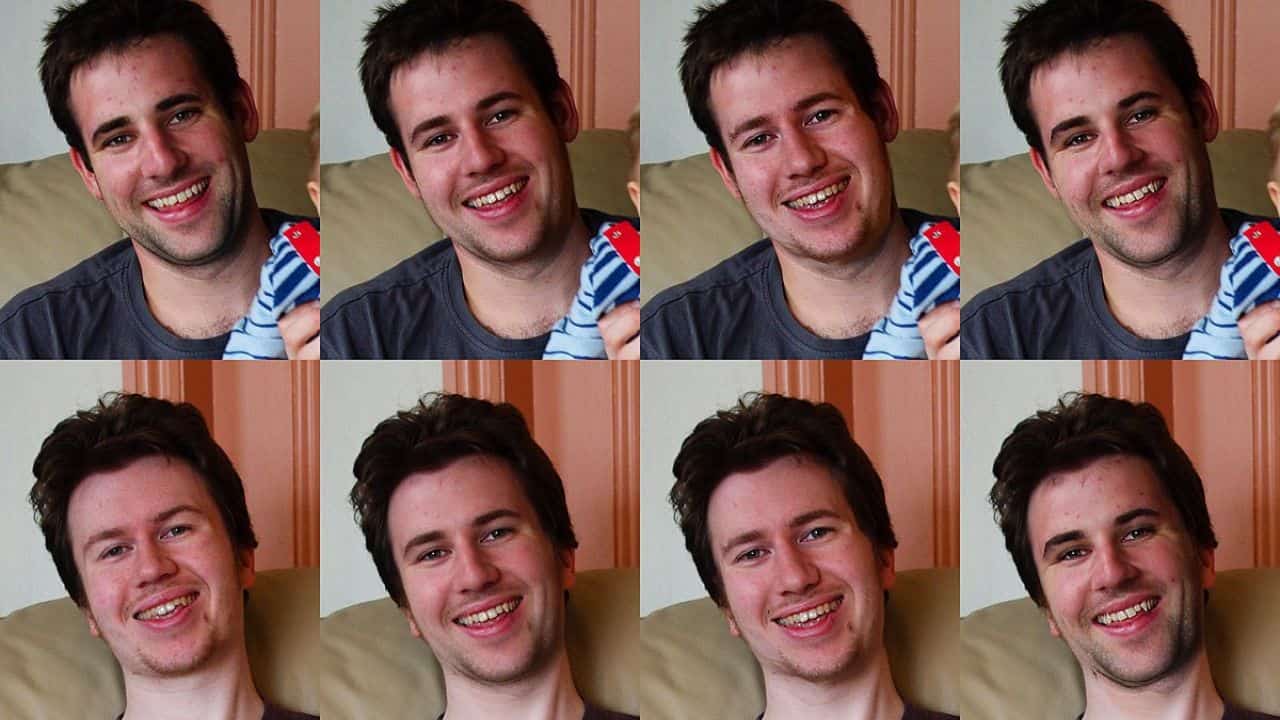

Have you ever seen Barack Obama call Donald Trump a “total idiot”, Mark Zuckerberg brag about having complete control over billions of people’s stolen data, or Jon Snow deliver a moving apology for the dark ending of Game of Thrones? If you answered “yes”, you have seen a deepfake.

Deepfakes are the 21st century’s answer to Photoshopping. They use a form of artificial intelligence called deep learning to create images of fake events, hence the name. Want to put new words in a politician’s mouth, star in your favorite movie, or dance like a pro? Then it is time to make a deepfake.

What is it used for?

Many are pornographic. The AI company Deeptrace found 15,000 deepfake videos online in September 2019, nearly double the number nine months earlier. A whopping 96% were pornographic, and 99% of those mapped the faces of female celebrities onto porn performers.

Fake videos are likely to spread beyond the celebrity world and fuel revenge porn, as new techniques allow even unskilled people to create deepfakes from just a few photos. As Danielle Citron, a law professor at Boston University, puts it, “Deepfake technology is being used as a weapon against women.” Beyond pornography, there’s plenty of parody, satire, and nonsense.

How is it made?

University researchers and special effects studios have long explored what is possible with video and image manipulation. Deepfakes themselves, however, were born in 2017, when a Reddit user of the same name posted doctored pornographic clips on the site. The videos swapped the faces of celebrities such as Gal Gadot, Taylor Swift and Scarlett Johansson onto porn performers.

Who makes deepfakes?

Everyone, including visual effects studios, porn producers, amateur hobbyists, and experts from academia and industry. Governments can also use this technology as part of their online strategies, for example to discredit and disrupt extremist groups or to contact targeted individuals.

What technology is needed?

It’s difficult to create a good deepfake on a standard computer. Most are built on high-end desktops with powerful graphics cards, or better yet, with computing power in the cloud. This reduces processing time from days or weeks to hours. It also takes expertise, not least to retouch the finished video and reduce flickering and other visual defects.

That said, there are now many tools that help people create deepfakes. Several companies will make them for you and do all the processing in the cloud. There’s also a mobile app called Zao that lets you add your face to a list of TV and movie characters the system has been trained on.

How do you identify deepfakes?

It gets harder as the technology improves. In 2018, US researchers found that deepfake faces do not blink normally. That makes sense: most photos show people with their eyes open, so the algorithms never really learn how to blink. At first it looked like a silver bullet for the detection problem. But no sooner had the research been published than deepfakes appeared with blinking. Such is the nature of the game: as soon as a weakness is revealed, it is fixed.
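The blinking cue can be sketched in a few lines of Python. This is an illustrative heuristic only, not the researchers' actual method. It assumes that some face tracker (hypothetical, not shown) has already produced one eye-openness score per video frame, and the function names and both thresholds are assumptions chosen for the example.

```python
from typing import Sequence


def count_blinks(eye_openness: Sequence[float], closed_below: float = 0.2) -> int:
    """Count blinks as transitions from an open eye to a closed eye.

    eye_openness holds one score per frame (assumed: 1.0 = wide open,
    0.0 = fully closed). closed_below is an assumed cut-off.
    """
    blinks = 0
    was_closed = False
    for value in eye_openness:
        is_closed = value < closed_below
        if is_closed and not was_closed:
            blinks += 1  # a new blink starts on the first closed frame
        was_closed = is_closed
    return blinks


def blinks_per_minute(eye_openness: Sequence[float], fps: float,
                      closed_below: float = 0.2) -> float:
    """Convert the blink count into a per-minute rate for the clip."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness, closed_below) / minutes


def looks_suspicious(eye_openness: Sequence[float], fps: float,
                     min_rate: float = 5.0) -> bool:
    """Flag clips whose blink rate falls below an assumed minimum."""
    return blinks_per_minute(eye_openness, fps) < min_rate
```

Because newer deepfakes do blink, a check like this would at best flag older or cruder fakes, and a low blink rate on its own proves nothing.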

Lower-quality deepfakes are easier to identify. The skin tone may be uneven or the lip synchronization poor. Transposed faces may flicker around their edges. Fine details such as hair are particularly hard for deepfakes to render well, especially around visible bangs. Poorly rendered jewelry or teeth can also be clues, as can odd lighting effects such as inconsistent illumination or strange reflections on the iris.

Governments, universities, and technology companies are all funding research to detect deepfakes. The first deepfake detection challenge was launched last month and was supported by Microsoft, Facebook, and Amazon. This will involve research teams from around the world vying for an edge in the deepfake detection game.

What’s the solution?

Ironically, AI could be the answer. Artificial intelligence is already helping to detect fake videos, but many existing detection systems have a significant weakness: they work best for celebrities, because they can be trained on hours of freely available footage. Technology companies are currently working on detection systems that aim to flag fakes as soon as they appear.

Another strategy focuses on the provenance of the media. Digital watermarks are not foolproof, but a blockchain-style online ledger could hold tamper-proof records of videos, images, and audio, so that their origin and any later manipulation can be checked at any time.
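To make the ledger idea concrete, here is a minimal sketch in Python. It is a simplified, in-memory hash chain rather than a real blockchain, and the `Ledger` class, its method names, and the record layout are all assumptions made for illustration. Each record stores a SHA-256 fingerprint of the media plus the hash of the previous record, so changing an old record breaks the chain.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64  # assumed placeholder "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def _record_hash(media_id: str, content_hash: str, prev_hash: str) -> str:
    """Hash the fields of a record in a stable (sorted-key) JSON form."""
    body = {"media_id": media_id, "content_hash": content_hash,
            "prev_hash": prev_hash}
    return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))


@dataclass
class Ledger:
    """Hypothetical append-only ledger of media fingerprints."""
    entries: List[dict] = field(default_factory=list)

    def register(self, media_id: str, content: bytes) -> dict:
        """Append a record that links back to the previous record."""
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        content_hash = sha256_hex(content)
        record = {
            "media_id": media_id,
            "content_hash": content_hash,
            "prev_hash": prev_hash,
            "entry_hash": _record_hash(media_id, content_hash, prev_hash),
        }
        self.entries.append(record)
        return record

    def chain_is_intact(self) -> bool:
        """Recompute every hash; any edited record makes this False."""
        prev_hash = GENESIS
        for entry in self.entries:
            expected = _record_hash(entry["media_id"], entry["content_hash"],
                                    entry["prev_hash"])
            if entry["prev_hash"] != prev_hash or entry["entry_hash"] != expected:
                return False
            prev_hash = entry["entry_hash"]
        return True

    def matches_original(self, media_id: str, content: bytes) -> bool:
        """Check whether content is byte-identical to what was registered."""
        digest = sha256_hex(content)
        return any(e["media_id"] == media_id and e["content_hash"] == digest
                   for e in self.entries)
```

A viewer could call `matches_original` on a downloaded clip to see whether it is byte-for-byte the registered original. Note that a plain hash only detects that a file changed, and even harmless re-encoding changes the hash, so a practical system would need more than this sketch.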